On Evolution, part 1

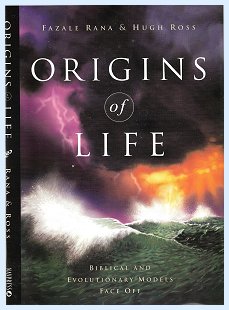

I just finished reading Origins of Life by Fazale Rana and Hugh Ross (NavPress, 2004). This is the book I've been needing for quite a while. The authors lay out all of the current theories concerning the natural (i.e. not brought about by any intelligence) emergence of life itself and basically show how the theories of various scientists have been scientifically shown to be insufficient by other scientists. It has given me a good sense overall where science is these days with regard to this issue.

A long, long time ago, when taking Zoology 1 as a freshman Zoology major at the University of Maryland, one of the first assigned readings was an article by George Wald from Scientific American, in which he pontificated,

"The important point is that since the origin of life belongs in the category of at-least-once phenomena, time is on its side. However improbable we regard this event, or any of the steps which it involves, given enough time it will almost certainly happen at-least-once. And for life as we know it, with its capacity for growth and reproduction, once may be enough."

One of the first lectures in the same course extolled Stanley Miller's famous experiment in which he recreated what some people thought earth's ancient environment might have been like, with just the right kind of elements and plenty of artificial lightning. At the end of a week there were some amino acids present, one of the building blocks of life.

Let me (not the book by Rana and Ross) tell you that George Wald's probability calculation is just plain wrong. This notion that, given a virtually infinite amount of time, anything that can happen is bound to happen does not work. Period. I know that a lot of people say it, but it's not true. The set of real numbers is not closed. Consequently, there is always room for novelty, and the occurrence you might wish to happen could just as easily be delayed an "infinite" amount of time.

Then, on the factual side, the book made me aware that there is nothing like 2 billion years available for life to emerge on earth. Wald couldn't have known yet, but the available time period is much, much shorter than naturalistic scientists had been counting on. So as not to spoil the book, I won't tell you how much (or, better, how little) of a window was available. But I was genuinely surprised. Well okay, I'm giving it away below.

What I also learned from the book was that Stanley Miller's amino acids were very nice amino acids. They catapulted him to fame so that some fifty years after his discovery people still mention him. But they also were very lazy amino acids that did not turn into peptide chains, proteins, cells, RNA or DNA, or anything else that would have made them "step one" in the incipience of life. In fact, it appears that all similar experiments, no matter what specific theory they are intended to undergird, have hit a dead end. Some particular substances may have been found or produced, but they have always turned out to be either inviable or inert. It's as though I were going to make biryani rice, and all the ingredients I had were a handful of cloves.

A third (and we'll make this the last) thing I took away from the book is the complexity of a cell membrane. You can't just have a knot of DNA and some mitochondria and call it an organism. They must be held together, and that's the job of the membrane. And the membrane is not just a piece of plastic wrap; it has organic functionality all of its own.

The book requires some small, but definite, preparation in understanding chemical (and to some extent biological) terminology. So, it may not be one that you would just hand out to all comers at your church's Christmas dinner. However, with a minimum of scientific knowledge (by that I mean nothing more than, say, high school chemistry), it is an invaluable aid in understanding the ongoing discussion between naturalists and advocates of intelligent design. If the mere thought of science doesn't send you off screaming, you should read this book.

This is an entry I've been meaning to write ever since late October in Taiwan when I was reading Who Was Adam?: A Creation Model Approach to the Origin of Man by Fazale Rana and Hugh Ross (NavPress, 2005), a book that easily deserves five stars out of five (or ten out of ten, depending on however many stars we're allowed to allot). It is scientific on a college level with all the references to the primary sources. It is not dogmatic, but allows the facts to speak for themselves. Clearly, the book seeks to make a case for creation, and it does so without repeating the same tired slogans, such as "evolution is only a theory," or "you can breed a thousand generations of fruit flies, and you still get nothing but fruit flies."

My one regret concerning this book is that it will probably be ignored by those who would profit the most from it, but the same data on which Rana and Ross base their conclusions are out there for anyone else to examine, and the time may come when frustrated scientists find themselves so thoroughly squeezed into a corner that they cannot help but consult alternatives.

What will follow in this entry is not so much a review of the book as thoughts precipitated by it. Yes, I remember writing not that long ago that I try to stay out of the evolution debate in this country, and, at that, please don't draw any inferences from this little piece as to whether I'm "for" or "against" evolution. I'm "for" intelligent design and creation, but I do not have a good grasp of the extent to which God used evolutionary processes in creating the world. If you've got me figured out on this point, you know more about me than I do. Until I tell you otherwise, this post is essentially descriptive.

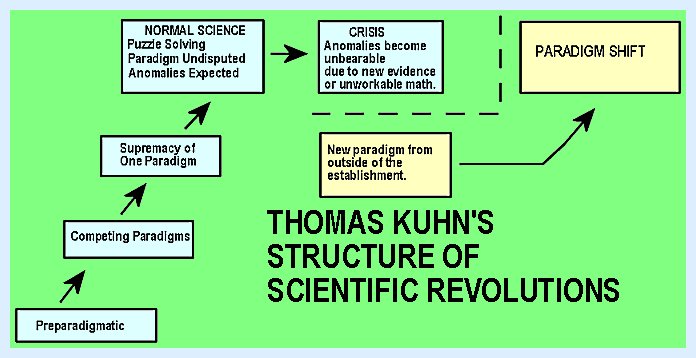

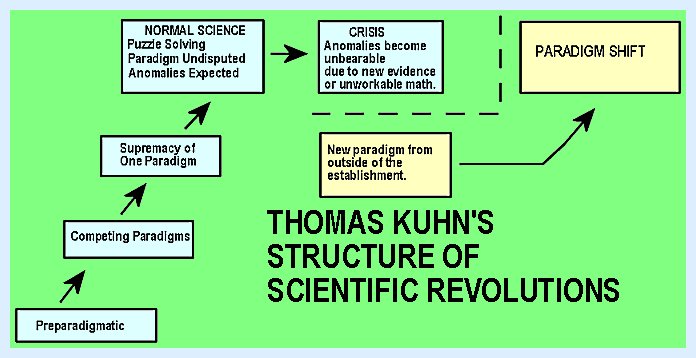

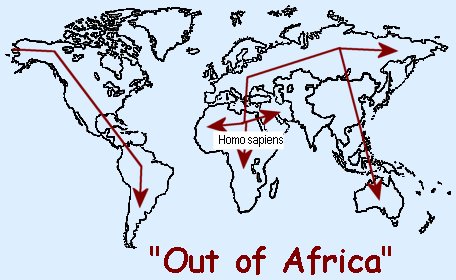

Let me begin by making reference to two approaches to the alleged evolution of Homo sapiens (viz. on the non-creationist side), on which there is a lot of debate. (Again, please, my language is not giving anything away, even though it may seem like it.) In fact, it appears to me that paleoanthropology is at what Thomas Kuhn might call the "crisis" stage.

-----[Kuhn advocated a somewhat sociological interpretation of how science works. As a discipline grows, various scientists will put forth different models or "paradigms" as the basic foundation of their subject matter (cf. the state of psychology with behaviorists competing against Gestaltists, going up against Freudians, etc.). Eventually a particular paradigm will emerge (e.g., the atomic theory or the periodic table), and acceptance of it will become mandatory in the discipline. No one will be able to get a doctorate or be able to teach in the field unless they propagate the paradigm. The scientists will solve puzzles within the confines of the paradigm, and they have to accept the fact that there are some anomalies that they may not be able to solve. But usually the basic paradigm does not get questioned. However, there may come a point when the paradigm is running itself into the ground because there is too much evidence incompatible with the paradigm, or because the mathematics engendered by the paradigm becomes unworkable. If there happens to be an alternative to the reigning paradigm, which also happens to solve the issues much better, and which also just happens not to bring in too many other complications, a new generation of scientists may undertake a virtual revolution and replace the old paradigm with the new one. Furthermore, if they manage to take over the social leadership in the field (viz. to be able to decide whom to admit to academic programs, whom to hire, promote, and tenure), the new paradigm may just become the new unassailable model for the field. The old paradigm will die out together with its obsolescent adherents.]-----

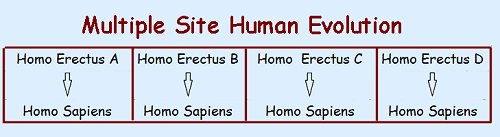

The two competing paradigms in paleoanthropology that are creating a lot of dispute, anger, vilification, and even occasional scientific discussions, are the "multiple origins" theory and the "Out-of-Africa" theory.

Now, I don't want to pile on the "multiple origins" theory, but it appears to me that it is a classic example of a scientific theory being upheld by scientists in the name of science despite its obvious violation of scientific principles. It strikes me as so obviously an ad hoc way of attempting to uphold an untenable theory that, even though it appears to be falling out of favor now, it is still a great illustration of how thin the straws are to which many atheistic scientists have held, just to get out of any notion of creation. The "Out-of-Africa" theory is, if I may use the term, a real god-send to those folks because there is no way in which one could scientifically sustain a theory that says that the identical species evolved from different previous species.

And thus I come to my central point: the evolutionary mechanics of speciation. I have come across or read very few people (regardless of whether they were for or against evolution, and regardless of whether they were scientists or not, other than those who were actually involved in evolutionary biology), who really demonstrate an understanding of the process. I must thank Dr. Richard Highton, at the time of the University of Maryland, for teaching a very hard, but insightful course on evolution, which at a minimum helped me to understand the process. I'm still sorry I didn't get to avail myself of the chance to collect salamanders with him during the summer of 1967; instead I worked as a waiter at Hot Shoppes in Bethesda, Md. I had Dr. Highton in a course in basic zoology before. I shall never forget his initial comment: "I don't want to be here, and I know that you don't want to be here. So, let's make the best of this." I don't think he ever won any teaching awards. But--as usual--I digress.

The first few weeks of that course in evolutionary biology were given over to mathematics. Stipulate a population of n individuals with m genetic traits. Introduce some factor f that has the potential to change the genetic make-up of the population. Calculate what happens to the population within one generation, two generations, three generations, etc. The answer is: "nothing." Within one generation, two or three at the most, equilibrium has been reestablished and the population carries on with essentially the same old collective phenotypes and genotypes as before. (Phenotype="what you see"; Genotype="the genetic make-up that you don't see, but that determines what you see.")
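For readers who like to see the arithmetic, here is a minimal sketch of the simplest version of such a calculation. It is my own illustration, not something from Dr. Highton's course, and it assumes the standard textbook Hardy-Weinberg setup: one gene with two alleles (A and a), random mating, and a one-time disturbance with no ongoing selection, mutation, or drift. The function names are hypothetical.

```python
def next_generation(p):
    """Genotype frequencies (AA, Aa, aa) after one round of random mating,
    given the frequency p of allele A in the parent generation."""
    q = 1.0 - p
    return (p * p, 2.0 * p * q, q * q)


def allele_frequency(genotypes):
    """Frequency of allele A: all of the AA share plus half of the Aa share."""
    freq_AA, freq_Aa, _freq_aa = genotypes
    return freq_AA + 0.5 * freq_Aa


# A disturbed starting population (assumed for illustration):
# 30% AA, 70% aa, and no heterozygotes at all.
genotypes = (0.3, 0.0, 0.7)
history = [genotypes]
for _generation in range(3):
    p = allele_frequency(genotypes)
    genotypes = next_generation(p)
    history.append(genotypes)

for number, (freq_AA, freq_Aa, freq_aa) in enumerate(history):
    print(f"generation {number}: AA={freq_AA:.2f} Aa={freq_Aa:.2f} aa={freq_aa:.2f}")
```

Under these assumptions the genotype proportions settle after a single round of mating and then stay put, while the allele frequency itself never moves. The sketch says nothing about what happens when a factor keeps acting generation after generation.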

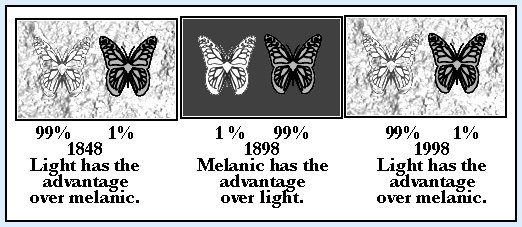

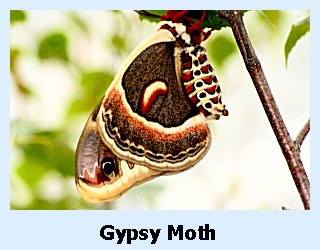

This result will obtain regardless of what is represented by the mystery factor f. Single (or even multiple) mutations, genetic drift, predation, availability of food sources, hybridization, even environmental changes will not have long-term effects on a species' genome. Take the poster child of environmental effects, the moth Biston betularia, which is native to England. It comes in two colorations, a light one and a dark--almost black--one. The latter is called "melanic" from the pigment "melanin," which gives it its dark color, and it has traditionally been considered to constitute a very small minority, representing less than one percent of the population of Biston betularia around Manchester in 1848. But then heavy industry with its smoke stacks moved in, covering all of the surrounding area with an ever-increasing layer of soot (and also being responsible for the proverbial heavy London fogs). Heretofore the light ones had the advantage of camouflage, while the melanic ones stood out visibly to predatory birds. Now the tables were reversed. As the environment was increasingly blackened, the melanic forms had the advantage of not being visible, while the lighter forms advertised themselves as ready food. Thus, fifty years after the initial count, the phenotypic numbers were entirely opposite: now the melanic form constituted 99% of the population, and the lighter form made up only about one percent. (Paul R. Ehrlich and Richard W. Holm, The Process of Evolution (New York: McGraw-Hill, 1963), pp. 125-131.)

So, we had about a hundred years of survival advantage of the melanic moths over the light-colored ones. The book to which I just referred was published in 1963, and at that time, the situation was still the same, with the melanic moths dominating the light ones. Ehrlich and Holm appeared to be pretty sure that, slowly but surely, the gene pool was being altered, that it had changed already, and that the changes would become permanent ("fixated") by this process of selection unless the conditions changed. As a matter of fact, there does not seem to have been any change to the gene pool whatsoever. As soon as factories started to clean up and stopped covering the area with soot, the distribution reverted to its original percentages, without its taking several generations to undo the previous changes. Thus, even though the melanic variety had a survival advantage for a hundred years or so, the underlying genome of the population had actually not changed. Even such a drastic change in the viability of one trait in individuals over another for such a long time, did not keep the population from retaining genetic equilibrium, so that, when the time came for it to change back again, it did so immediately.

So, in this class Dr. Highton weaned us off the naive notions of how evolution works: You introduce a factor that promotes change, and a new species will evolve. It just doesn't happen that way. Why should it? Just because a certain phenotype has an advantage does not mean that the full genotype, passed on from generation to generation, should be affected. It could be affected; otherwise there could be no long-range adaptation, let alone evolution, but it would take a whole lot more than one point of incentive.

Okay, I need to amend the above statement. It is possible that the selection process goes on long enough so that the disadvantaged genotype gets eliminated totally (genetic drift). But, given the mathematics, this can only occur in rather small populations, for which the most likely outcome is going to be extinction of the species, not evolving into a new species.
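To make the point about population size concrete, here is a rough sketch of pure genetic drift. The model is an assumption on my part (a Wright-Fisher-style lottery in which every gene copy in the next generation is drawn at random from the current one, with no selection at all), and the function name, population sizes, and number of generations are hypothetical choices for illustration.

```python
import random


def drift(pop_size, start_freq, generations, rng):
    """Track the frequency of one allele under pure random drift.

    Each generation, 2 * pop_size gene copies are drawn at random, and each
    copy carries the allele with probability equal to its current frequency.
    Returns the list of frequencies, starting with start_freq.
    """
    copies = 2 * pop_size
    freq = start_freq
    trajectory = [freq]
    for _generation in range(generations):
        count = sum(1 for _copy in range(copies) if rng.random() < freq)
        freq = count / copies
        trajectory.append(freq)
    return trajectory


rng = random.Random(1967)  # fixed seed so that a run can be repeated
small = drift(pop_size=10, start_freq=0.5, generations=200, rng=rng)
large = drift(pop_size=1000, start_freq=0.5, generations=200, rng=rng)
print("small population, final allele frequency:", small[-1])
print("large population, final allele frequency:", large[-1])
```

Once the frequency reaches 0 or 1 it can never leave, which is what losing (or fixing) a variant means in this model. In a typical run the small population gets there far sooner than the large one, though any single run is only one roll of the dice.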

Once again, what I thought of originally as a single entry is going to take at least two or three. Until I get back to this blog let me just emphasize: Do not think that what I've said above is an argument against evolution. If it amounts to anything so far, it is nothing more than to say that evolutionary processes are far more complex than usually described. All right, I will go this far tonight: seeing that Darwin did not have an inkling of what we now know of genetics, his theory was built on a philosophical presupposition rather than on scientific data. But you knew that already. More next time.

On Evolution, part 3

Wow! Am I ever bushed tonight! I hadn't done much "thera-wii" with the Wii Fit program since November (actually, none), and only before that time sporadically. I finally took it up again yesterday and today, and I can't believe how much stamina I have lost over the last couple of months. In addition, I needed to do some simple remounting of clothes poles in two closets, and I was surprised again by how little energy I have. I'll gain back some of it once the weather gets warmer, I think, but if I wind up writing incoherent prattle tonight, you'll know why.

I left off last night trying to make the simple point that it's extremely difficult to change the genome of a population of biological organisms (plants or animals). Even if you were to divide a population in half and select for a different trait in each, that does not mean that the underlying complement of genetic material has been altered. Think of it this way: There are two groups of people, each of whom owns a box with little wooden building blocks of many colors. One group uses only green blocks, while the other one limits itself to only red ones. However, each individual in both groups still has a full set of blocks and could switch to a different color if they wanted to. There are many different varieties of dogs, ranging from Papillons to Great Danes, but all of them still constitute one species, viz. they interbreed (at least in principle), as shown by the great number of "mutts" in the world. In fact, since for a lot of organisms one can't put representatives of two groups into a cage to see if they will copulate, one has to look for hybrid intermediates in nature to make the somewhat subjective judgment whether there are two species or only one.

"We could not understand a lion even if he could talk." Wittgenstein. "Lions don't understand Wittgenstein." Me.

So, how does speciation actually work? Popular TV shows tend to ascribe intentionality to the organism. Imagine a documentary on lions in the Maasai Mara. A male lion is roaming through the bush and comes across a female with some cubs. The announcer intones: "This is a dangerous situation for the young lions. The old male is most likely going to kill them because he seeks to advance his own genetic offspring rather than that of another lion." --- Really? That's an awful lot of abstract thinking ascribed to the lion who is acting out his instincts. I guarantee you that the lion is not contemplating notions of the reproductive superiority for his own genetic stuff. He doesn't even know what those things are. Whether a drive toward "genetic egotism" is a valid inference about the lion's actions by a scientific theorist is neither here nor there. The lion is not familiar with evolutionary psychology.

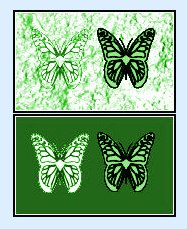

Nevertheless, again and again descriptions of evolutionary developments ascribe teleology to the organisms. The following quote comes from what I believe is the very best book on butterflies, and I heartily recommend it if you have some serious interest in Lepidoptera. But look at the intentionalist language that the author uses:

But why do most butterfly larvae [caterpillars] eat the plants of only a few genera or families? The best model for the answer is a perpetual "war" between insects and plants. Ancient insects ate ancient plants; in response, the plants evolved thick, tough, tooth-edged leaves that carry spines or hairs, hooked hairs, gums, resins, high levels of silica, and even raphides (long crystals of calcium oxalate), all of which physically deter animals from eating the leaves. Plants have also evolved chemical defenses against attack. . . . Along the way, then, plants that were edible to many animals were eaten and extirpated, and only the plants with defenses remained. By evolving one or more of these repellents, a plant could survive all but the few insects able to withstand its defenses and poisons. The few remaining insects then often evolved the ability to use the plant's own poison to find it, for the poison is generally a reliable indicator of plant identity (James A. Scott, The Butterflies of North America: A Natural History and Field Guide [Stanford, CA: Stanford University, 1986], pp. 65-66).

and a little while later:

Some butterflies have adopted special tactics to prevent their own extinction. The mate-finding habits of some species tend to prevent extinction: at low density, for example, mating proceeds efficiently at a small rendezvous site such as a hilltop, whereas at high density there are too many males to operate from the same small hilltop, and some of the mating accordingly occurs elsewhere, at lesser efficiency (Ibid., p. 105).

This is interesting stuff, but somewhat misleading. Anthropomorphisms aside, is there really evidence that there was a time when all insects ate all plants before the plants developed their defenses? It follows from the general theory, but I'm not sure how one could even prove such a contention. Regardless, let's agree that organisms do not decide whether or how they are going to evolve themselves.

The basis of the method of evolution is the interaction between genetic mutations and the environment. If it is going to happen at all, the situation must meet the following conditions.

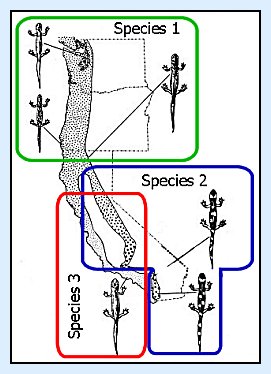

A good example of how this process might work is demonstrated by the work of Robert C. Stebbins on the salamanders of the genus Ensatina. Stebbins and his co-workers collected specimens of Ensatina all along the West Coast. The following picture emerged:

Well, this is going to take at least one more installment. Something else is missing from what I've said so far if this process is supposed to move from the hypothetical to the actual. Can you figure out what that would be? Let me know what you think before I tell you the next time.

On Evolution, part 4.

Well, it's time to finish my thoughts that were sparked by reading Who Was Adam by Rana and Ross. I left off by saying that there was a missing element in my description of the process of evolution up to that point. Thanks to Cousin A. for having the courage to venture a guess in attempting to read my mind. Of the three points he made, it's the first one that I was contemplating. As for the other two:

A. commented: "This would occur if the chromosomes are not "compatible", i.e. having nothing to do with appearance. I have yet to see a mechanism that can explain how this can happen."

My response: You are probably wanting to know what changes happen directly on the chromosomal level, and I don't know that anyone has clarified that. Still, at least it seems as though we have a more surface model in Stebbins's work. We don't conclude that there are two species by examining the chromosomes, let alone by the organism's outward appearance, but by whether they interbreed. If they don't, we have two species. Due to the "reproductive isolation" of the two halves of the Ensatina population, in southern California that's exactly what appears to have happened. Of course, this is a rather murky situation because interbreeding takes place on the north-south axis for either group and on the east-west axis about halfway down the line of separation. Still, I can't get around the fact that, if the central population were eliminated, there would be no question that we were looking at a total of three species: one northern one and two southern ones. Admittedly, this is a hypothesis contrary to fact, but it seems to me that it illustrates a plausible mechanism.

A's comment in nuce: "I have yet to see an example of ANY mutation that is genuinely beneficial."

My response: My hunch is that the probability of a beneficial mutation occurring would be pretty low, but I don't see how one can make a universal judgment either way (viz. that either there are some or that there are none), except on the basis of an a priori assumption. That there are mutations is beyond question, and I can't rule out that some of them could be of benefit to an organism. Sticking to humans as an example--and I know that this is precariously close to an argument from silence--I suspect that we wouldn't even recognize beneficial mutations as such; we would only recognize that someone is exceptionally strong, beautiful, smart, or whatever. As the human genome continues to be studied, we will learn a lot more.

A's comment: "A change dramatic enough to require drastic adaptation statistically will kill the organism long before it has had time to figure out how to change. If it can adapt quickly, then no genuine (chromosomal) change is required, and hence no 'evolution'."

My comment: I would put it this way. The organism would not be able to adapt to the change in the environment unless it already contained the genetic basis for the required adaptation.

Let's go back to the much-touted story of the two varieties of the moth Biston betularia, the light-colored one and the melanic (dark) one. As the soot settled over the industrial regions of northern England, the moths did not have to wait for one of them to start mutating a suitably melanic form, but melanism was already in its genetic arsenal. In fact, from what we could tell at the end of the twentieth century, apparently the genome of the population never did get changed. All that happened was that for a time one variety was favored over another. Given the reversal of proportions, from 99/1 to 1/99, it would appear that, if it had not been for the melanic mutation, which was already present, Biston betularia would have become yet another species in the "extinct" column of the ledger.

Or let's consider a case on a much larger scale. If I remember correctly, grazing animals evolved after browsing animals because grasslands appeared later than leafy plants. (If I got this wrong, we can retell the story in reverse.) So, let us stipulate a population of proto-deer, which has been happily dining on leaves for quite a while. Its neighbors are the tiny little dawn horses (Eohippus), who are grazers, but unfortunately for them, there is nothing yet for them to feed on. Well, that wouldn't work, so the dawn horses must have been browsers as well. But they're really too small to be browsers, so let's just forget about them for the moment.

Now grass appears. So do all those grazing dawn horses. Where did they come from? This is getting confusing. Let me start over.

Now grass appears. We have two options. Either

In either case we have a problem. In the first case, the probability should be that the browsers would die out long before there has been enough time for any of them to evolve into grazers (including Eohippus, regardless of what his forebears were eating). Since that didn't happen, the adaptation must have taken place in an unbelievably short time, much shorter than similar evolutionary developments among other animals. The fossil record, as reconstructed nowadays, seems to bear this out. Given the evolutionary time scale, the development of Eohippus into the horse happened in a very short time. So short, in fact, that the only way in which one can explain the rapidity of the change is by inventing new nomenclature to cover it. It was tachytelic (extremely fast) evolution, as opposed to bradytelic (extremely slow) evolution. Unfortunately, as always, finding new terms to describe a phenomenon does not help us much in understanding the phenomenon.

Under the second possibility, where the grass is simply an addition to the leafy plants, there is insufficient reason for the browsers to evolve into grazers, which they obviously did. In fact, it was not just perissodactyls (hoofed animals with odd numbers of toes), such as horses or rhinos, that showed up and started to feed on grass, but some members of the originally browsing artiodactyls (hoofed animals with even numbers of toes), such as bovines, switched over to grazing as well. Furthermore, as we just said, the fossil record shows us that they did so in record time. Once the grass appeared, grass-eaters appeared right along with it.

So, let us summarize, adopting an evolutionist stance: Once upon a time there were only browsing animals and no grazers because there were no grasslands yet. When grass evolved, it opened up a whole new niche, which was filled in an unusually short time by newly-evolving grazing animals, as illustrated by the evolution of Eohippus into the horse. However, it is also a logical necessity that, in order for this rapid adaptation to take place, the ancestors of the grazers must already have had the genetic disposition to evolve in that direction. According to evolutionary theory, organisms are supposed to be genetically determined as to what nourishments they need and take. They don't just mutate into the direction of trying a new food source because they want to. Or, for that matter, because one of them tried it and liked it, and so they all followed suit. You just can't have it both ways: either the genetic determinism holds, in which case you need to have the change in genes occur before the change in behavior, or you need to beg out of one of the main planks that upholds the theory of evolution: viz. that changes in the genes are sustained in response to changes in the environment.

Now, we agreed last time that neither animals nor plants decide for themselves how they are going to evolve. But if you look at the directions in which the gene pool moved, it would be much likelier that either nothing changed or that all of the browsers should have gone extinct than that grazers should appear and that they should do so at such a rapid rate. It is not possible to look at the events in the history of evolution, whether we are talking about the emergence of grazers or the "warfare" between butterflies and their host plants, without recognizing some sort of directionality. The evolutionists themselves provide the best examples thereof, as demonstrated by Scott's exposition of the interaction between butterflies and plants, which I quoted yesterday. Remember:

I know that scientists like Scott don't mean such anthropomorphic or intentionalist language literally. My point is that looking at the facts and taking the probabilities into account, it's virtually unavoidable to see directionality.


And thus the time has come for me to go out on a limb (by which I do not mean that I am going to devolve into a brachiating anthropoid). What you see is what you get.

Evolutionary adaptation requires that the mutations necessary for the adaptation are already present in the genome. That requirement entails that there is an intentional directionality in the evolutionary process.

First of all, if you start from the perspective of creation in six twenty-four hour days, none of what I've said has anything to do with your position. I'm not advocating evolution, but I'm describing what would be entailed in a theory of evolution.

Second, if you hold to some form of progressive creationism under some name or other, I trust that these observations contribute to your position.

Third, even if you take any idea of creation out of the picture, I contend that it is still not possible to describe the evolutionary process without recognizing some form of directionality. If you prefer to think or speak of it as some unknowable, unutterable, mysterious force, that's up to you, but I think that the idea of a personal infinite God as Creator is more rational.

Fourth, let me come back around to my initial point. I've tried to call attention to all of the difficulty and complexity involved in speciation. My suggestion is that, for all practical purposes, it is impossible to look at the process and not see directionality along the way. Consequently, the evolution of Homo sapiens simultaneously from different species of Homo, viz. Homo erectus A, Homo erectus B, etc., is utterly unsustainable. In fact, it appears to me that it is becoming increasingly likely that the notion of intelligent design, regardless of how phrased, may just be on its way to becoming the new paradigm.

I haven't heard from anyone who knows of or has written a response to a recent article on the origin of life in Smithsonian, so hopefully I'm not just duplicating someone else's labors. The article in question [Helen Fields, "Before There Was Life," Smithsonian 41, 6 (October 2010): 48-54; photographs by Amanda Lucidon] is actually as much about the mineralogist Bob Hazen as about the scientific issues with which he concerns himself. The article portrays him as a person who can develop enthusiasm for many areas of study, who loves to play music, and who, together with his wife Margee, enjoys amassing collections (including one of thousands of trilobites), which they then donate to publicly accessible displays. Hazen receives his regular pay envelope from the Carnegie Institution for Science in Washington, D.C., where he does research utilizing high-pressure, high-temperature equipment.

A little more than a year ago, I wrote an entry in which I expressed my appreciation for the book Origins of Life by Fazale Rana and Hugh Ross (NavPress, 2004). If I may quote myself: "[The book] has given me a good sense overall where science is these days with regard to this issue." Where science is, is stuck. If one scientist comes up with a new wrinkle on a method in which life may have originated spontaneously, other scientists immediately apply the starch and iron out the wrinkle.

The Smithsonian article quotes Hazen as saying, "A few years before, we would have been laughed out of the origins-of-life community." (50) The fact that he does not activate the risibility of his fellow scientists could be due to the significance of his work. It could also be due to the fact that this so-called community is desperate for any notion, no matter how bizarre, that will provide them with a possible idea of how life may have originated on the basis of purely naturalistic events. Furthermore, as Hazen himself avers, NASA is happy to cooperate with origin-of-life endeavors, which will presumably result in funding for both partners. The cold hard truth appears to me to be that Hazen has not yet made any contributions that advance the research significantly.

Please note the words "not yet" in the previous sentence. This expression could be a sign of a realistic assessment, leaving the door open to something marvelous making a sudden appearance in the future. On the other hand, it could also be an indicator of unbounded optimism, conveying the attitude that it's only a matter of time before the significant epiphany will occur. My use of "not yet" is in the former sense; articles of this nature tend to reflect the latter attitude.

It is of serious benefit to some areas of contemporary science that the languages of most popular writers on science contain a future tense and a subjunctive mood. A lot of articles written for the public glide smoothly from what a scientist has discovered to what will be proven as the next step of their work, and this article constitutes no exception, as I will show after I have explained Hazen's work a little more.

Let us start with a working definition of life; there is no universal agreement on how it is phrased, but the outcome tends to be pretty much the same: We know that we are looking at a living organism when it consists of proteins and it reproduces itself. At its most simplistic, to have protein you need to have peptide chains, and to have peptide chains, you need to have amino acids. So, where can one get amino acids on a planet that is supposedly still in the process of formation, billions of years prior to the emergence of anything that we would recognize as "nature"? Lots of places, as it turns out.

In 1952, Stanley Miller recreated what he imagined to be the conditions of earth in its early, pre-life stage. This is the primordial soup, which scientists now know to have been extremely thin, if it existed at all. Miller sent electric sparks through the mixture, and--to everyone's surprise (except maybe Miller's)--some of the elements in his concoction interacted so as to form amino acids. But that's as far as Miller's experiment went. He could not find a way in which, still trying to simulate natural conditions, he could persuade the amino acids to combine any further to take the next step to becoming a life form.

And Hazen has not gotten any further. What he has done (the "breakthrough," as Fields calls it) is to show that amino acids can be recreated in environments other than Miller's hypothetical early earth. One of the theories of the naturalistic origin of life is that the necessary building block molecules may have been produced at thermal vents in the ocean. These are springs at great depths in the sea that release extremely hot water at extremely great pressure. Hazen has simulated those conditions in containers called "pressure bombs," and, starting with the right kinds of elements and precursor molecules, after some time amino acids and some other organic (i.e. carbon-based) compounds have appeared. And thus, he has wound up pretty much where Miller was fifty-eight years ago: with amino acids that have little inclination to do any further work.

There are some further problems of which Miller was not aware back at his moment of temporary triumph, but that Hazen has to take into account.

Hazen stipulates that the key to raising the probabilities may lie with the presence of minerals. To quote from the article:

"'So the chances of a molecule over here bumping into this one, and then actually a chemical reaction going on to form some kind of larger structure, is just infinitesimally small,' Hazen continues. He thinks that rocks--whether the ore deposits that pile up around hydrothermal vents or those that line a tide pool on the surface--may have been the matchmakers that helped lonely amino acids find each other. . . . An amino acid that drifts near a mineral could be attracted to its surface. Bits of amino acids might form a bond; form enough bonds and you've got a protein." (50-51, emphasis mine)

Now I'm going to make a comment that will appear to be unkind and then try to show that the comment is not quite as unkind as it looks at first glance: We see here that if you ignore the problems (e.g. the survival of the molecules) and gratuitously stipulate the necessary assumptions (e.g., the availability of ammonia), you can achieve great results in the subjunctive mood. So, I'm saying in so many words that Hazen solves a scientific problem purely theoretically. But what (maybe, to some extent) takes the sting out of that evaluation is the observation that this has been a philosophical, theoretical problem all along. The last paragraph of the Smithsonian article begins:

"Everywhere he looks, Hazen says, he sees the same fascinating process: increasing complexity. 'You see the same phenomena over and over, in languages and in material culture--in life itself. Stuff gets more complicated.'"

Really? That statement is at least disputable, if not downright false. I can't pass judgment on what Hazen sees, but I can assess the truth of the existence of the phenomenon to which he refers. Unfortunately, the statement is too vague to evaluate completely, but one item is clearly wrong: languages definitely simplify over time. And here's a debatable question: My normal transportation consists of a car with an automatic transmission. Does this state of affairs represent greater or lesser complexity than if it had a standard transmission? Whose point of view is decisive: the driver's or the engine builder's? What if I usually travelled by horse and buggy and had to maintain a horse? Would things be more or less complex compared to maintaining a car? My point is that Hazen is expressing a subjective point of view, and he sees what he has been trained to see. I must confess that I don't see what he sees. My life now is relatively complicated. But it would be rather arbitrary for me to say that it is more complicated than if my survival depended on the contingencies of a hunter-gatherer economy. For that matter, I cannot imagine anything more complicated than the naturalistic methods scientists propose to somehow get those molecules generated, bonded, bonded some more, and then to reproduce themselves. But if the door remains closed to the idea of creation, where else can you go than into this mythological realm of science-in-the-subjunctive?

Jimm's comment on last night's entry sent my mind (which is not identical with the chemical events in my brain) in the other direction. It really doesn't bother me too much that God may have a bad rep among atheists. However, it occurs to me that the advocates of a naturalistic origin of life are in a real double-bind, and they don't know nearly as much science as God does. Even were someone to come up with a highly plausible mechanism by which the first life forms may have originated, that would still not prove that things really went that way in the past (whether six thousand, ten thousand, or 3.85 billion years ago), nor would it rule out that all those chemical events occurred at God's direction. Recall, if you would, the earlier discussion. My point--anticipated by Cousin Alfred--was that no organic change can survive if the preconditions for sustaining it do not exist beforehand. Under that scenario, it gets to be extremely hard to think of large-scale adaptations or innovations without some intended directionality.

But finding a place for God in the scheme of things is not a great concern for me, whether it be in this context or some other issues. As long as there are contingent entities in the world, there is plenty for God to do. (Another reason why it's time to put the overspecified kalām argument back to bed.) It just so happens that the nature of the universe is such, namely actualized potential, that without a Creator and Sustainer it could not exist, and the nature of living organisms is a highly refined instantiation of this point.

What bothers me are the self-hypnotically induced mirages within the so-called origins-of-life community, which are then published to the world as scientific facts. And from there, as Jimm says, these "facts" are likely to be tested as ammunition against God, as it were--specifically nowadays by the "new atheists." But even apart from that possibility, the reading public enjoys learning about Mr. Hazen and his many interests (I did) and takes in the scientific backdrop as just as factual as the location of his weekend cottage.

I'm not implying intentional deception by the scientists, the editors, or the writers, such as Ms. Fields. She pens a human-interest story about this somewhat distinctive man who, to his own great surprise, finds himself on the cutting edge of "origins-of-life" science. In writing her article, she highlights his acclaimed achievements because that's what good authors do. (Smithsonian is not "60 Minutes," thank the Lord!) She maintains a distance between herself and the subject of her essay, injecting quotes at the appropriate points because she is, after all, neither the expert that Mr. Hazen is nor his evangelist.

Mr. Hazen has spent a great part of his life on the contemporary equivalent of the quest for the "philosopher's stone," and really has no other place to go than to continue along that line--or be laughed right out of the Carnegie Institution and the joint grant with NASA. A de-granted scientist is easily as bad a thing to be in today's society as a de-frocked priest was at one time.

The editors are delighted to be able to publish a story that seems to fit in well with the "Smithsonian" image. It's unique in its own way, and it combines interest in a specific person with his scholarly endeavors. Freedom of the press and free speech are wonderful blessings!

So, why nit-pick this article? Because I, too, have the same freedom of expression, and I think it would be helpful to point out that at the core of this story is an all-too-casual approach to the real world, in which there is a much more marked line between what is today and what may be tomorrow.

All (or perhaps much) of the necessary bibliography is already provided by Ross and Rana, so you don't have to start with an index search looking for articles that contain words of which you can't know until you have the article in front of you. People can consult R&R's book and, if they so desire, they can check out the original sources. Science is done in public (for the most part), and--even if you can't follow all of the technical stuff--a scientific article is supposed to mention the point it is trying to make. The problem from my perspective is that far fewer people are going to read Ross and Rana, let alone pursue the sources, than will read Smithsonian.

People enjoy novelty. The idea that God created the world and living beings has been around ever since Adam. No matter how futile, a scientific quest may consist of many fresh steps, which are just around the corner, at least according to popular magazines. Back in their day, the Roman Emperors would keep the populace happy with free bread and circuses. Now our government entertains us by funding science-in-the-subjunctive.